How does the attention-economy-driven algorithmic amplification of conflict-driven and negative-emotional communication distort public discourse? And does this distortion constitute a systemic risk under the Digital Services Act (DSA)? This blog post follows up on our previous post on Platform Badges for Civic Communication, explains why such interventions are needed, and outlines how they could address these systemic risks.

by Jan Rau

Digital platforms shape political communication through ranking, recommendation, and engagement-based optimization; these systems do not merely mirror public debate but actively determine which forms of political expression receive attention at scale. Within this environment, conflict-driven and negative-emotional communication tends to occupy a structurally advantaged position. In our recent paper Platform Badges for Civic Communication, we argue that, under the DSA, this dynamic gives rise to systemic risks to civic discourse as understood in Articles 34 and 35.

Systemic Risk under Articles 34 and 35 DSA

Articles 34 and 35 DSA require very large online platforms to identify, assess, and mitigate systemic risks arising from the functioning and design of their services. Particularly relevant is the systemic risk outlined in Art. 34(1)(c) DSA, which covers “any actual or foreseeable negative effects on civic discourse and electoral processes, and public security.” This is the provision we primarily refer to when outlining the harmful impact of the structural overrepresentation of conflict-driven, negative-emotional communication and its societal effects.

The Digital Attention Economy

Central to this argument is the intensification of the digitally mediated attention economy, in which communicators – both established and emerging – compete for limited human attention amid an ever-increasing volume of information. To maximize engagement, communicators and platforms employ digital tracking and analytics systems that fine-tune content to align with user behavior and algorithmic preferences.

Antagonism and Outrage as Structurally Privileged Forms of Communication

Empirical research shows that content emphasizing group identity, emotional conflict, and antagonism – “us versus them” framing rather than solution-oriented debate – achieves especially high visibility online. Rather than echo chambers alone, sustained and often unmoderated confrontation across political groups, supported by interconnected digital spaces, underpins contemporary polarization.

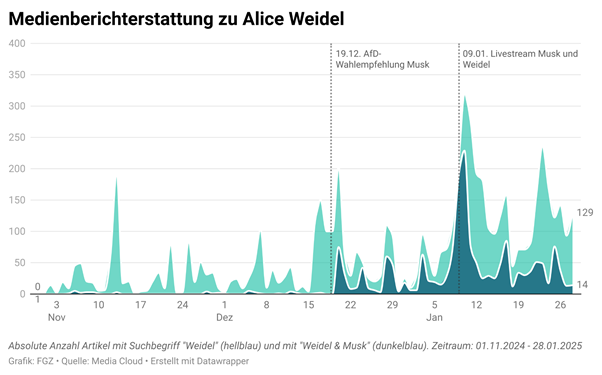

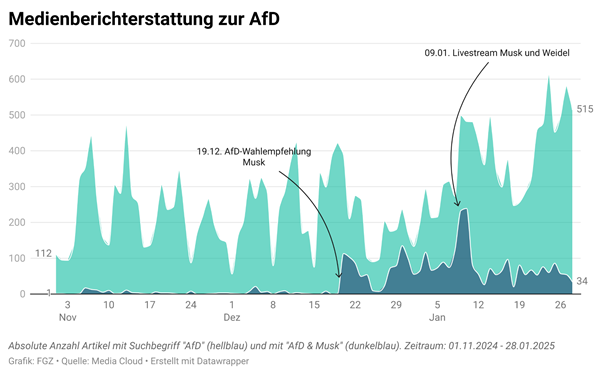

Because conflict-laden, negative-emotional communication is effective at capturing attention and mobilizing support, many actors deliberately amplify hostile, antagonistic discourse. For authoritarian actors in particular, such styles are central to communicative success. In this context, digital platforms are especially problematic: driven by attention-maximizing logic, ranking and recommendation systems frequently reinforce engagement-generating, conflict-oriented discourse. Over time, this produces a structural bias in favor of antagonism, escalation, moral polarization, and emotional outrage.

Societal Implications

Although the amplification of emotionally charged and conflict-driven content has received comparatively little attention in platform governance debates, its implications for democratic societies are substantial. A growing body of evidence links its prevalence to hyperpolarization, including the erosion of democratic norms and mutual toleration. Hyperpolarization, in turn, can foster political gridlock, weaken collective responses to societal challenges, create openings for authoritarian encroachment, and escalate into political violence. Given these effects and the success of certain actors in exploiting digitally amplified antagonistic discourse, the amplification of such content poses a systemic risk to civic discourse, electoral integrity, and public security, as articulated under Article 34 DSA.

The Importance of Political Conflict

It is important to underline that political conflict, emotional expression, and polarization are not inherently harmful but are central to pluralistic democracy, particularly for marginalized groups seeking visibility and collective identity. Attempts to suppress conflict through enforced consensus risk obscuring underlying injustices rather than addressing them, and efforts to curb polarization without engaging its roots may simply mute legitimate dissent. Political conflict in itself is not problematic; on the contrary, it is necessary and intrinsic to politics. Its constant digital overrepresentation, however, has become harmful, fostering hyperpolarization and opening the door to authoritarian actors, as outlined above.

Risk Mitigation Through Platform Badges

Overall, the structural overrepresentation of conflict-driven, negative-emotional communication – and its documented societal effects – can indeed be understood as constituting a systemic risk to civic discourse, electoral processes, and public security, thereby requiring appropriate risk-mitigation measures.

Measures such as the proposed platform badges for civic communication can serve as effective risk-mitigation mechanisms. These badges operate as governance-by-design interventions: they create positive incentives for communicators who adhere to democratic communication norms and, in return, receive increased visibility on platforms. Rather than restricting content, badges aim to rebalance attention distribution by selectively amplifying norm-compliant communication. This shifts the regulatory focus from isolated content-moderation decisions to the structural communicative effects produced by platform architectures and design choices – effects that ultimately shape which forms of political expression dominate the digital public sphere.

Conclusion

Conflict and emotion are not incompatible with democratic communication. However, when platform systems consistently privilege conflict-driven negative emotionality, they introduce a structural bias that reshapes civic discourse at scale and to a problematic degree. Recognizing this dynamic as a systemic risk under Articles 34 and 35 DSA and advocating for appropriate risk mitigation measures – such as platform badges – is therefore essential.
